Conceptual framework

TrichomesAI is a physical-digital installation that explores the interaction between plants, humans, and artificial intelligence.

Plants are complex ecosystems whose communication strategies, imperceptible to us, ultimately serve adaptation and survival. This becomes even more evident when human presence enters the equation. Plants and humans have coexisted for as long as humans have existed. Yet now more than ever, humans are growing distant from nature.

Technology is often identified as the primary catalyst for the shift to the digital Anthropocene era we currently inhabit. But what if we could use technology to reverse this process? How might artificial intelligence forge new pathways for interaction between plants and humans?

With this perspective, TrichomesAI aims to create an intimate and individual artistic experience. The installation encourages visitors to sit back and listen to plants, interact with the surrounding space, and reconnect with nature through the subtle mediation of technology.

Design | How it works

TrichomesAI features a modular design that can be easily adapted to various venues.

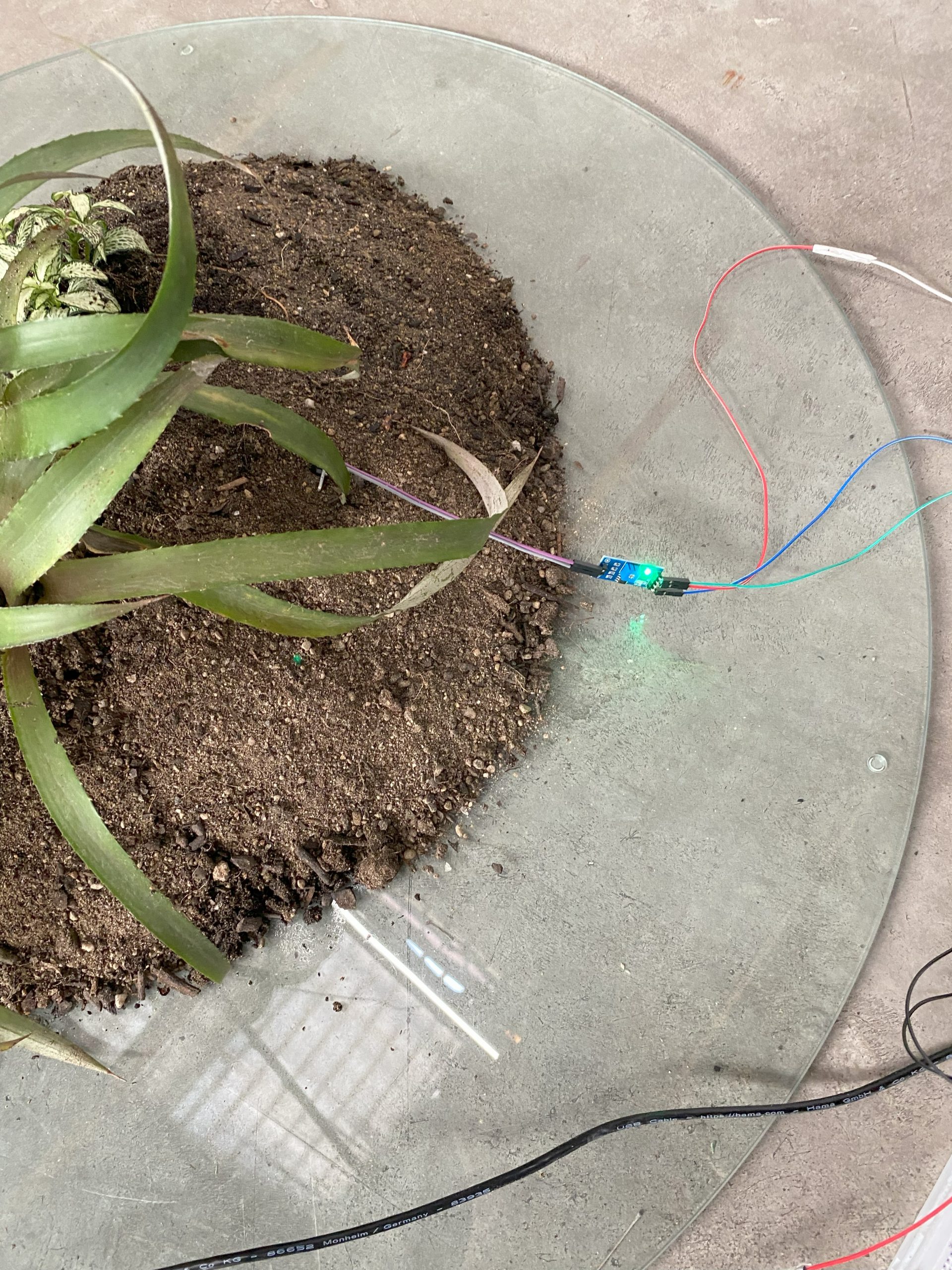

In its basic configuration, the installation consists of three glass structures covered with soil and plants. Visitors are invited to sit in the center, between these structures, and interact. There are no strict rules, only suggestions: watering the plants, gently touching their leaves, playing with the soil, or moving stones around the structures.

All visitor actions, combined with data from the plants, feed into the audiovisual system. Screens placed around the installation display the visual output, while visitors experience the soundscape through headphones.

The result is an intimate, individual experience: a solitary reflection on nature and technology.

Art | Technology

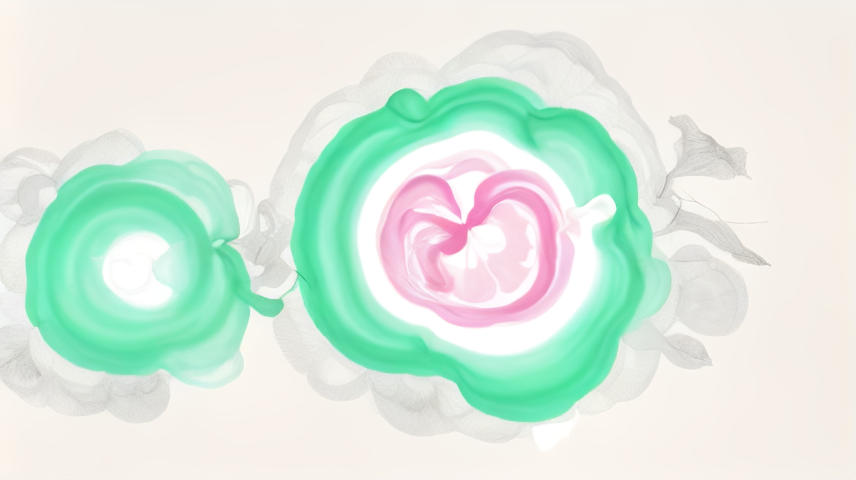

The foundation of the installation is a custom machine learning model trained on high-resolution scans of abstract paintings.

The installation space is equipped with sensors that monitor both plant data – light levels, humidity, soil moisture, temperature, and pressure – and visitor data, including spatial position, hand movements, and specific actions (such as watering plants, moving rocks, or touching leaves).
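One way to picture the plant-side data stream is as a small record per reading, scaled to a common range before it reaches the audiovisual system. The sketch below is illustrative only: the `PlantReading` class, the `normalize` function, and every unit and value range in `RANGES` are assumptions, not the installation's actual code or calibration.

```python
from __future__ import annotations

from dataclasses import dataclass, asdict

# Hypothetical value ranges used for normalization. The real calibration
# of the installation's sensors is not documented, so these are placeholders.
RANGES = {
    "light": (0.0, 1000.0),         # lux (assumed unit)
    "humidity": (0.0, 100.0),       # percent relative humidity (assumed)
    "soil_moisture": (0.0, 100.0),  # percent (assumed)
    "temperature": (0.0, 40.0),     # degrees Celsius (assumed)
    "pressure": (950.0, 1050.0),    # hPa (assumed)
}


@dataclass
class PlantReading:
    """One reading of the five plant quantities named in the text."""
    light: float
    humidity: float
    soil_moisture: float
    temperature: float
    pressure: float


def normalize(reading: PlantReading) -> dict[str, float]:
    """Scale each field to 0..1, clamping values outside the assumed range."""
    scaled = {}
    for name, value in asdict(reading).items():
        low, high = RANGES[name]
        scaled[name] = min(1.0, max(0.0, (value - low) / (high - low)))
    return scaled
```

Normalizing everything to the same 0..1 range means downstream visual and audio parameters can be driven by any sensor without knowing its unit.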

The visual system begins with high-resolution scans of abstract paintings and progressively generates motion visuals whose features are controlled by plant and visitor data. A custom machine learning algorithm continuously alters these motion visuals, keeping the imagery in constant flux. Additionally, every minute the system captures a snapshot of the visual output and feeds it back into the machine learning system for further training and development.
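The once-a-minute snapshot described above could be scheduled along the following lines. This is a minimal sketch under stated assumptions: `SnapshotScheduler`, its `tick` method, and the in-memory `queue` are hypothetical names, and how the installation actually stores snapshots or retrains on them is not specified here.

```python
class SnapshotScheduler:
    """Collects a frame whenever the capture interval has elapsed.

    Hypothetical helper: the caller passes the current time in seconds
    and the latest rendered frame on every update of the visual system.
    """

    def __init__(self, interval_s: float = 60.0):
        self.interval_s = interval_s
        self._last_capture_s = None  # no snapshot taken yet
        self.queue = []              # frames waiting to be used for training

    def tick(self, now_s: float, frame) -> bool:
        """Store `frame` if it is due; return True when a snapshot was taken."""
        due = (
            self._last_capture_s is None
            or now_s - self._last_capture_s >= self.interval_s
        )
        if due:
            self.queue.append(frame)
            self._last_capture_s = now_s
        return due
```

In this sketch the first call always captures a frame, and later frames are captured only once a full interval has passed since the previous snapshot.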

The audio system is based on a virtual granular synthesizer running in a tape loop. Plant and visitor data control synthesizer parameters such as grain size, grain position, tape speed, and others. The result is an ever-changing and evolving soundscape.
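As an illustration of how sensor values might drive the synthesizer, the sketch below maps three normalized inputs (0..1) onto grain size, grain position, and tape speed by linear interpolation. The pairing of each input with a parameter, the output ranges, and the names `lerp` and `synth_params` are all assumptions made for the example; the installation's real mapping is not documented here.

```python
def lerp(low: float, high: float, t: float) -> float:
    """Linearly interpolate between low and high, clamping t to 0..1."""
    t = min(1.0, max(0.0, t))
    return low + (high - low) * t


def synth_params(soil_moisture: float, light: float, hand_speed: float) -> dict:
    """Map normalized inputs to granular synthesizer parameters.

    All pairings and ranges below are hypothetical.
    """
    return {
        "grain_size_ms": lerp(20.0, 200.0, soil_moisture),  # wetter soil, longer grains
        "grain_position": lerp(0.0, 1.0, light),            # light scrubs through the loop
        "tape_speed": lerp(0.5, 2.0, hand_speed),           # faster hands, faster tape
    }
```

For example, `synth_params(0.5, 0.25, 1.0)` yields a 110 ms grain, a grain position a quarter of the way into the loop, and double tape speed.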

Technical specifications

TrichomesAI is developed using TouchDesigner, Python, ESP32 sensors, StreamDiffusion, and custom LoRA models.

Team

- Luz Valencia: art

- Guillermo Maglio: design

- Gianmaria Vernetti: technology